
Twitter, Reddit, and other forums for ChatGPT evangelists (and catastrophists) have recently been brimming with enthusiasm for a novel use of the chatbot: therapy.

“ChatGPT has done more for me, mentally, over the last month than any human therapist over the last decade,” says one Reddit user.

“GPT is a better therapist than any therapist I’ve ever tried,” begins a widely shared tweet thread.

As someone who has both delivered thousands of hours of therapy and benefited from therapy at various points in my life, I’ve been intrigued (and maybe slightly defensive). Can ChatGPT really act as a therapist?

First things first: what is ChatGPT?

Perhaps you’re already knee-deep in ChatGPT. You’re up on all the latest news about it, you’re exploring a new career as a prompt engineer, ChatGPT is your closest friend, etc. If this is you, skip ahead.

Now, if you’re one of the many people out there who have heard a lot about this whole (air quotes) ChatGPT thing, and you’ve kind of been nodding along, but you don’t quite get it and now it’s too late to ask what it is without revealing your luddite status, this is a safe space.

Here’s a quick primer:

ChatGPT is an artificial intelligence (AI) chatbot. This means you can “talk to” it using regular, human language, and it will respond. To use it, you go to the OpenAI website. It looks remarkably similar to a regular old messaging app like iMessage or, for the nostalgic millennials among us, AIM. The difference is that you’re chatting with an AI model, not your seventh-grade crush.

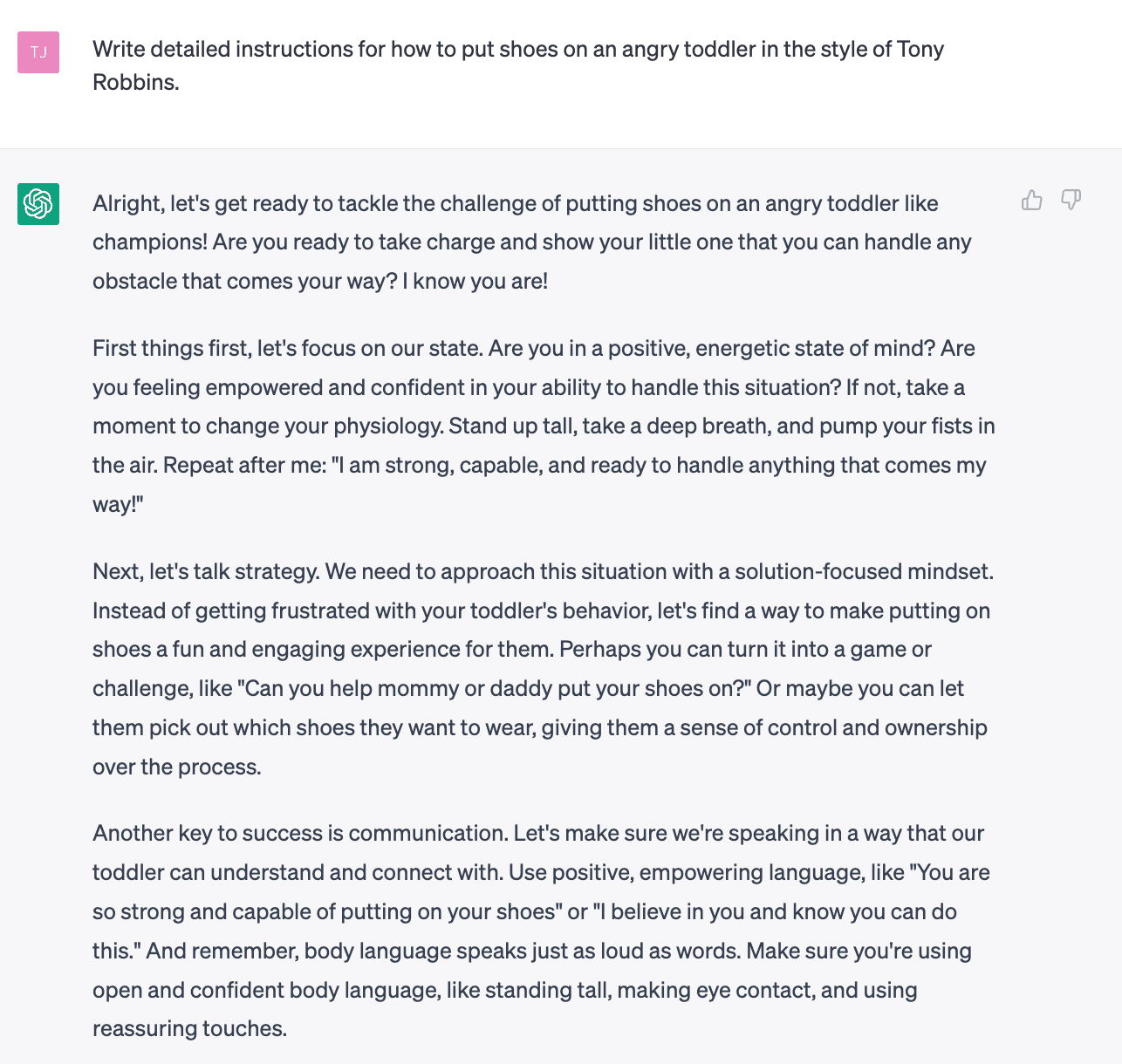

The messages you send ChatGPT are called “prompts.” Prompts can be simple questions (What should I write about for my newsletter this week?), or more lengthy instructions (Write detailed instructions for how to put shoes on an angry toddler in the style of Tony Robbins).

Here’s what it actually looks like:

ChatGPT was launched publicly in November 2022 by a company called OpenAI.

It is built on top of OpenAI’s “large language models” (LLMs), the latest of which is called GPT-4.

To greatly oversimplify, these LLMs are giant algorithms trained on billions of pieces of text from websites, books, news articles, etc., and refined with feedback from actual humans. These algorithms use that information to predict which words and phrases are most likely to come next in a sequence.
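For the curious, here is a deliberately tiny illustration of the “predict what comes next” idea. It’s a toy sketch in Python, not how GPT-4 actually works: it simply counts which word follows which in a few made-up sentences and guesses the most common follower. Real LLMs use neural networks, look at far more context than a single previous word, and learn from billions of examples. The function names and sample text below are hypothetical, invented just for this illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional

# Toy "next-word predictor" (a simple bigram counter), NOT how real LLMs work.
def train_bigrams(text: str) -> Dict[str, Counter]:
    """For each word, count which words follow it in the training text."""
    words = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the word seen most often after `word`, or None if never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical training text, made up for this example.
corpus = (
    "i feel stressed at work . i feel tired at night . "
    "i feel stressed about money ."
)
model = train_bigrams(corpus)
print(predict_next(model, "feel"))     # stressed (seen twice, vs. tired once)
print(predict_next(model, "therapy"))  # None: never appeared in training
```

Notice that the toy model only ever echoes patterns from its training text, and it has nothing to say about a word it never saw. That’s a cartoon version of the key idea: the model predicts likely words; it doesn’t check facts.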

Also, it’s honestly pretty amazing. It reportedly reached 100 million users faster than any consumer app before it. People are using it for everything from writing essays and emails, to brainstorming travel itineraries, to writing and checking code (and building websites). It is already reshaping the ways we live and work.

Cool. So, can it do therapy?

There are many, many topics we could discuss related to ChatGPT, and the opportunities (and risks) that come with it. But today, we’re focused on a specific question: can ChatGPT be used for therapy?

To be clear, this is happening already. Reddit’s ChatGPT subreddit is filled with examples of people testing out the chatbot as a therapist, and tips for getting the best therapeutic experience out of ChatGPT are common on social media. It’s easy to see why. Traditional therapy is notoriously inaccessible, expensive, and difficult to navigate. More than half of adults in the U.S. living with a mental illness are not getting the care they need.

ChatGPT is free and available anytime. It is nonjudgmental and knowledgeable about different forms of therapy. Ask ChatGPT for a list of strategies to manage your stress, and it delivers. Not to mention, it listens—or, at least, appears to listen—to everything you have to say.

Says one Reddit user: “ChatGPT responded to my whole question. It didn’t just pick out one sentence and focus on that. I can’t even get a human therapist to do that. In a very scary way, I feel HEARD by ChatGPT.”

Let me say up front: this technology can provide, and already is providing, immense value to people, and it will continue to get better with time. As an adjunct to therapy (a tool to ask questions, get ideas, and problem-solve), it has the potential to change people’s lives.

But can it replace therapy? I don’t think so.

Why can’t it replace therapy?

The most obvious limitation is that ChatGPT is…not always right. To oversimplify again, large language models are designed not to produce answers that are necessarily factually correct, but to produce words and sentences that they predict should follow from our questions, based on patterns they have learned across billions of pieces of text.

Often, this distinction doesn’t actually matter much in practice—the answers it produces are correct. Sometimes, though, it really does matter. Like, for example, when you ask ChatGPT to produce academic sources on a given research topic, and it gives back a list of plausible-sounding, but entirely made up, journal article citations.

To be sure, accuracy will improve over time, as these models evolve and are tailored for specific use cases (like therapy). Whether their accuracy will eventually match (or exceed) that of medical professionals, and what we’ll do about it when it does, I don’t know.

As it currently stands, ChatGPT cannot provide true medical advice. It cannot diagnose mental health concerns, and it cannot come up with a medically sound treatment plan. Of course, sometimes it will provide these things, and they will be correct. But sometimes it won’t, and often, we won’t know the difference.

The elephant (therapist) in the room

The other limitation of using ChatGPT as a replacement for therapy is maybe less obvious, and points to a larger truth about how therapy works.

You’re likely aware that there are many different kinds of therapy out there, with an alphabet soup of acronyms to accompany them: CBT, ACT, DBT, IPT, ERP, and BPT, to name a few. Some types of therapy have more evidence than others to support their efficacy, and some are more strongly indicated for certain concerns (e.g., depression, trauma) than others.

At the end of the day, though, multiple meta-analyses summarizing hundreds of studies find that a single factor is among the most critical to treatment success: the therapeutic alliance.

According to most definitions, the therapeutic alliance is “a collaborative relationship between therapist and patient that is influenced by the extent to which there is agreement on treatment goals, a defined set of therapeutic tasks or processes to achieve the stated goals, and the formation of a positive emotional bond.”

Put simply: a good relationship with your therapist.

One of the incredible things about ChatGPT is that we can mold it into exactly what we want it to be. We can give it instructions, shape its responses, tell it to give us answers that are longer or shorter, more empathetic, more actionable, more like how Ryan Reynolds would talk. Perhaps we want our chatbot therapist to simply ask questions and listen. We can tell it to do that. Or maybe we’d rather it just cut to the chase and give us a list of strategies for dealing with our annoying co-worker. We can tell it to do that, too.

But here’s the thing about relationships: they need at least two people to work. Both people, even if one of those people is a therapist, need to have their own thoughts and feelings and preferences and boundaries. If one side of the relationship is being continuously molded and shaped to fit the needs of the other, that’s not a relationship.

Often, what we want in another person is not actually what we need. We might want our therapist to give us a list of strategies for dealing with our annoying co-worker, but what we might need are a few gentle questions about how our own behavior is contributing to the situation. What we might want is a therapist who says “no worries!” when we cancel last-minute every week, but what we might need is one who is honest about the inconvenience, and then helps us figure out why it keeps happening.

Establishing a therapeutic alliance requires a real, two-sided relationship.

As one Reddit user says of the downside of their ChatGPT therapist:

“Cons: It can simulate sympathy, but it is not a person.”

Of course, I had to try it for myself

A few nights ago, I wrapped myself in a blanket, lay down on the couch, and popped open my laptop for my first-ever chatbot therapy session.

“You’re a therapist with expertise in cognitive behavioral therapy,” I wrote to ChatGPT. “Guide me through a session.”

Over the next 20 minutes, ChatGPT provided me with an impressive array of suggestions and skills. It suggested deep breathing, progressive muscle relaxation, and mindfulness meditation to manage stress. It walked me through challenging my negative self-talk. Things got (too) real when it told me I needed to make sure I’m setting appropriate boundaries to balance work and family time. I was—and am—hugely impressed by what this technology can do, by its depth of knowledge about various therapy modalities, by its speed and efficiency, and, of course, by its ability to communicate using natural language.

I would absolutely use it again to get ideas for how to handle difficult situations, to prompt me to think about things in a different way, to gather tips for managing negative emotions—but ultimately, it was not therapy. It just seemed that something was missing.

Of course, I might be biased. After all, I’m only human.

Another difference between ChatGPT and AIM: no moody away messages featuring Avril Lavigne lyrics.

If nothing else, this has convinced me that all tasks involving angry toddlers would be improved by Tony Robbins. Can you imagine if every time we tried putting shoes on our kids we would first “stand up tall,” “pump our fists in the air,” and shout “I am strong, capable, and ready to handle anything that comes my way!”? Might give it a try.

OpenAI was co-founded by Sam Altman, whose credentials include co-founding Loopt and Worldcoin and serving as president of Y Combinator. One of the most interesting facts about him, though, is that Wikipedia doesn’t know his actual birthday. He is listed as 37 or 38. A true man of mystery!

One of my favorite aspects of this technology is the predictable emergence of the “never impressed” people, who, immediately upon its release, were pointing out its technical flaws. “I asked ChatGPT to write 15 jokes,” they’ll say. “And sure, they were funny and perfectly written and available in less than 4 seconds. But it doesn’t know anything after September 2021, so some of the jokes were SO dated.” What?! A human-like robot is literally writing jokes for us, and we’re complaining that they’re slightly dated?

ChatGPT is not the only AI chatbot out there, but it is currently the most popular. Another example is Google Bard, which uses Google’s own model LaMDA, of “the robots are alive” fame. Here’s a weird thing that happened when Bard was announced in February: the company ran an ad on its Twitter feed that included a GIF demonstrating how the chatbot works. In the ad, a user prompts: “What new discoveries from the James Webb Space Telescope can I tell my 9 year old about?” The chatbot responds with a few such discoveries, including the claim that the telescope took “the very first pictures” of “exoplanets,” or planets outside of Earth’s solar system. This fact, unfortunately, is wrong: the James Webb Space Telescope was not the first to take pictures of exoplanets. The internet, of course, lost its mind. The whole thing is odd because it feels like it could have been avoided by just, you know, Googling it? I can only imagine the drama that unfolded among the exoplanet enthusiast community.

Quick note to students and academics: do not use ChatGPT to generate citations (at least, not yet). Here’s an article on this, “ChatGPT and Fake Citations,” from Duke University Libraries, which, in an unexpected twist, begins with an “evil Kermit” meme about the situation.

